Previously in my Home Lab series, I described how my home lab Kubernetes clusters runs with a DHCP CNI–all pods get an IP address on the same layer 2 network as the rest of my home and an IP from DHCP. This enabled me to run certain software that needed this like Home Assistant which wanted to be able to do mDNS and send broadcast packets to discover device.

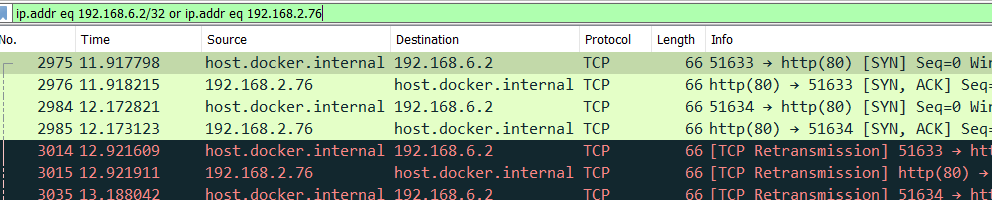

However, not all pods actually needed to be on the same layer 2 network and lead to a few situations where I ran out of IP addresses on the DHCP server and couldn’t connect any new devices until reservations expired: