I’ve been slowly reducing the amount of data I share with Google. I’ve used Google Location History since 2013 and found it really useful: I could look up which restaurant I went to while traveling, among any number of other things.

I found OwnTracks, an open-source location history storage solution. It’s not nearly as polished as Google Maps, which natively integrates your location history, but step one is owning my data; step two can be better UIs.

In this post, I’m going to walk through exactly how to get data from Google Location History and import it into OwnTracks.

Set up OwnTracks

Feel free to follow the guides here to set up the OwnTracks Recorder and UI.

Exporting from Google

- Go to https://takeout.google.com/

- Deselect all, then select Google Location History

- Choose “Export once” with a file size of 4GB

- Wait for it to export, then download and extract the .zip file
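The last step can also be scripted. The following is a minimal sketch, assuming the archive contains a member named Records.json (the file the conversion script later reads from /data/Records.json). The function name `extract_records` is hypothetical, and the folder layout inside the archive is not assumed, so the code matches on the file name only.

```python
import shutil
import zipfile
from pathlib import Path


def extract_records(zip_path, out_dir):
    """Extract the first archive member named Records.json into out_dir."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        for name in archive.namelist():
            # Match on the file name only; the folder layout inside the archive may vary.
            if Path(name).name == "Records.json":
                target = out_dir / "Records.json"
                # Stream the member to disk so a multi-gigabyte file is never held in memory.
                with archive.open(name) as src, open(target, "wb") as dst:
                    shutil.copyfileobj(src, dst)
                return target
    raise FileNotFoundError(f"No Records.json found in {zip_path}")
```

Calling `extract_records("takeout.zip", "/data")` (with your own archive name in place of the placeholder) would leave the file at /data/Records.json.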

Importing into OwnTracks

I first tried to follow this guide, which uses this importer in the OwnTracks/recorder repository. My exported JSON file was 2GB; the import took hours, and the MQTT importer seemed to drop a few records here and there.

Instead, let’s try to import it directly and bypass MQTT. I opened up OwnTracks’ /store folder and checked out the file format:

```
ls /[...]/rec/user/phone# head 2023-01.rec
2023-01-01T00:00:52Z * {"_type":"location","tid":"fl","tst":1672531252,"lat":47.12345,"lon":-122.12345,"acc":13,"alt":123,"vac":4}
2023-01-01T00:01:52Z * {"_type":"location","tid":"fl","tst":1672531312,"lat":47.12345,"lon":-122.12345,"acc":13,"alt":123,"vac":4}
[...]
```
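To make the format concrete, here is a small sketch that parses one such line and checks that the ISO timestamp at the start agrees with the `tst` epoch value in the JSON payload. It assumes only what the sample above shows: three whitespace-separated fields, with the JSON payload last. The helper names `parse_rec_line` and `is_consistent` are hypothetical.

```python
import json
from datetime import datetime, timezone


def parse_rec_line(line):
    """Split one .rec line into (timestamp string, marker, payload dict)."""
    # Fields are whitespace-separated; the JSON payload is the remainder of the line.
    timestamp, marker, payload = line.strip().split(None, 2)
    return timestamp, marker, json.loads(payload)


def is_consistent(line):
    """Check that the ISO timestamp prefix matches the payload's tst epoch value."""
    timestamp, _, record = parse_rec_line(line)
    parsed = datetime.strptime(timestamp, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)
    return int(parsed.timestamp()) == record["tst"]
```

Running `is_consistent` over the lines of a generated file is a cheap sanity check before copying anything into the Recorder's storage.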

The file was plain text and appeared easy to generate. I wrote a Python script that loads the data from /data/Records.json, then writes one text file per month to /data/location/yyyy-mm.rec.

```python
import json

import pandas as pd

# Load the Takeout export; the top-level "locations" list becomes one dict per row.
gps = pd.read_json('/data/Records.json', typ='frame', orient='records')
print('There are {:,} rows in the location history dataset'.format(len(gps)))

# Expand each location dict into columns, then convert the E7 integer coordinates to degrees.
gps = gps.apply(lambda x: x['locations'], axis=1, result_type='expand')
gps['latitudeE7'] = gps['latitudeE7'] / 10.**7
gps['longitudeE7'] = gps['longitudeE7'] / 10.**7
gps['timestamp'] = pd.to_datetime(gps['timestamp'])

owntracks = gps.rename(columns={'latitudeE7': 'lat', 'longitudeE7': 'lon', 'accuracy': 'acc', 'altitude': 'alt', 'verticalAccuracy': 'vac'})
owntracks['tst'] = (owntracks['timestamp'].astype(int) / 10**9)

# Open one output file for every month between the first and last year in the data.
years = {'min': int(owntracks['timestamp'].dt.year.min()), 'max': int(owntracks['timestamp'].dt.year.max())}
files = {}
for year in range(years['min'], years['max'] + 1):
    for month in range(1, 13):
        files[f"{year}-{month}"] = open(f"/data/location/{year}-{month:02d}.rec", 'w')

try:
    for index, row in owntracks.iterrows():
        ts = row['timestamp']
        record = {'_type': 'location', 'tid': 'aj'}
        # Timestamp.timestamp() yields UTC epoch seconds; time.mktime would assume local time.
        record['tst'] = int(ts.timestamp())
        for key in ['lat', 'lon']:
            if key in row and not pd.isnull(row[key]):
                record[key] = row[key]
        for key in ['acc', 'alt', 'vac']:
            if key in row and not pd.isnull(row[key]):
                record[key] = int(row[key])
        timestamp = ts.strftime("%Y-%m-%dT%H:%M:%SZ")
        line = f"{timestamp}\t* \t{json.dumps(record, separators=(',', ':'))}\n"
        files[f"{ts.year}-{ts.month}"].write(line)
finally:
    # Close every file even if a row fails to convert.
    for file in files.values():
        file.close()
```

After the script generates the data, find your OwnTracks data directory, then copy the output into the /{owntracks_store_dir}/store/rec/{user}/{device} folder (the same rec/user/device layout shown in the listing above). If you replace any existing files, you will lose data recorded by OwnTracks itself, so I recommend downloading the Google Location History data first, converting and importing it, and only then starting to collect new data.
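If the target folder already holds data, a copy that refuses to overwrite is a safer default. The sketch below is one way to do it, not part of OwnTracks itself. The function name `copy_rec_files` is hypothetical, and it also skips empty files, since the conversion script opens a file for every month whether or not any records land in it.

```python
import shutil
from pathlib import Path


def copy_rec_files(src_dir, dest_dir):
    """Copy *.rec files into dest_dir without overwriting anything already there."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    copied, skipped = [], []
    for src in sorted(Path(src_dir).glob("*.rec")):
        if src.stat().st_size == 0:
            continue  # month with no records: nothing to import
        target = dest / src.name
        if target.exists():
            skipped.append(src.name)  # leave recorder-written data untouched
            continue
        shutil.copy2(src, target)
        copied.append(src.name)
    return copied, skipped
```

Any file names returned in `skipped` are months where both sources have data; those would need to be merged by hand rather than replaced.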

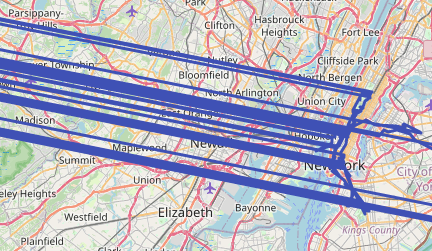

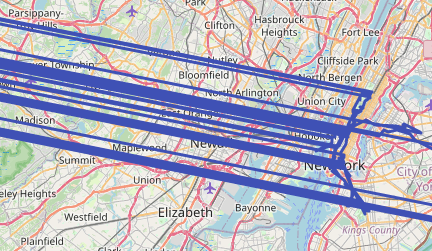

When I loaded this, the history looked crazy, showing me moving in and out of a city instantly:

It turns out that multiple devices on my account were reporting location, so it appeared that I kept jumping between two cities. That is easy enough to fix by excluding the extra device.
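A quick textual check for this situation is to compute the first and last timestamp reported by each device and list the pairs whose ranges overlap. The following is a rough sketch using only the standard library. It assumes each entry in the export's `locations` list carries the `deviceTag` and `timestamp` fields used elsewhere in this post, with ISO 8601 timestamps ending in `Z`. The function names are hypothetical.

```python
from datetime import datetime
from itertools import combinations


def device_ranges(locations):
    """Map each deviceTag to the (first, last) timestamp it reported."""
    ranges = {}
    for loc in locations:
        tag = loc.get("deviceTag")
        if tag is None:
            continue  # some records may not carry a device tag
        # Older Python versions reject a trailing "Z" in fromisoformat, so normalise it.
        ts = datetime.fromisoformat(loc["timestamp"].replace("Z", "+00:00"))
        first, last = ranges.get(tag, (ts, ts))
        ranges[tag] = (min(first, ts), max(last, ts))
    return ranges


def overlapping_devices(ranges):
    """Return pairs of device tags whose reporting ranges overlap in time."""
    pairs = []
    for (a, (a_start, a_end)), (b, (b_start, b_end)) in combinations(sorted(ranges.items()), 2):
        if a_start <= b_end and b_start <= a_end:
            pairs.append((a, b))
    return pairs
```

Overlapping ranges are only a coarse signal: two phones used back to back can overlap by a few days without causing the jumping effect, so the result is a list of candidates to inspect rather than a list of devices to drop.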

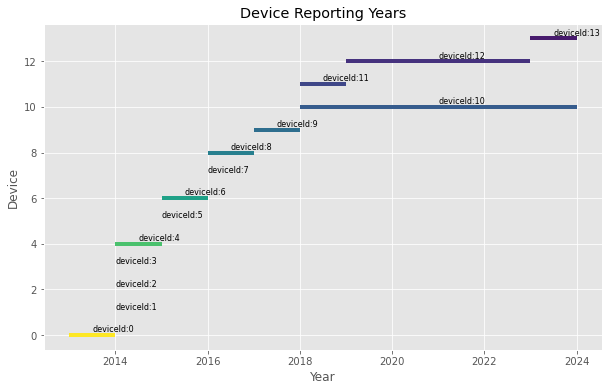

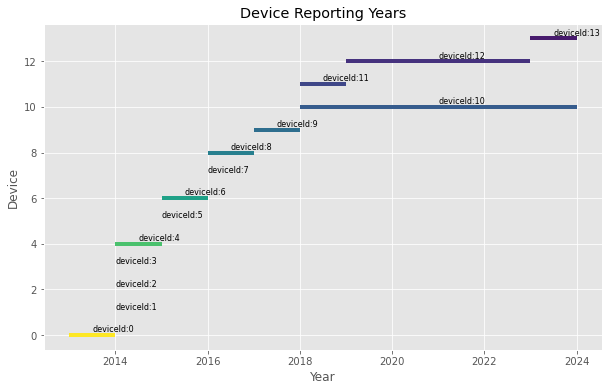

The following script produces a chart that shows when devices are reporting their location:

```python
import matplotlib.pyplot as plt

gps.sort_values(by='timestamp', inplace=True)
devices = gps['deviceTag'].unique()

plt.figure(figsize=(10, 6))
colors = plt.cm.viridis_r([i / len(devices) for i in range(len(devices))])

# Draw one horizontal bar per device, spanning its first to last reporting year.
for i, device in enumerate(devices):
    device_data = gps[gps['deviceTag'] == device]
    first_year = device_data['timestamp'].dt.year.min()
    last_year = device_data['timestamp'].dt.year.max()
    middle_year = (first_year + last_year) / 2
    plt.hlines(y=i, xmin=first_year, xmax=last_year, color=colors[i], linewidth=4)
    # Label each bar with the device's index rather than its long deviceTag.
    plt.text(middle_year, i + 0.25, str(i), verticalalignment='center', fontsize=8)

plt.xlabel('Year')
plt.ylabel('Device')
plt.title('Device Reporting Years')
plt.grid(True)
plt.show()
```

With this I can identify which device is reporting in parallel. In my case, it’s the 10th device that’s reporting in parallel with my phone. Note that I’ve replaced the device IDs; yours will be long random numbers.

From there, I filtered this device out of the location history:

```diff
+excluded_devices = [1234510]
 owntracks = gps.rename(columns={'latitudeE7': 'lat', 'longitudeE7': 'lon', 'accuracy': 'acc', 'altitude': 'alt', 'verticalAccuracy': 'vac'})
 owntracks['tst'] = (owntracks['timestamp'].astype(int) / 10**9)
+owntracks = owntracks[~owntracks['deviceTag'].isin(excluded_devices)]
```

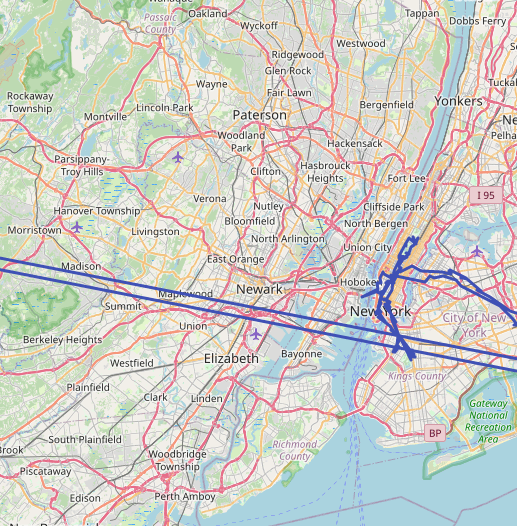

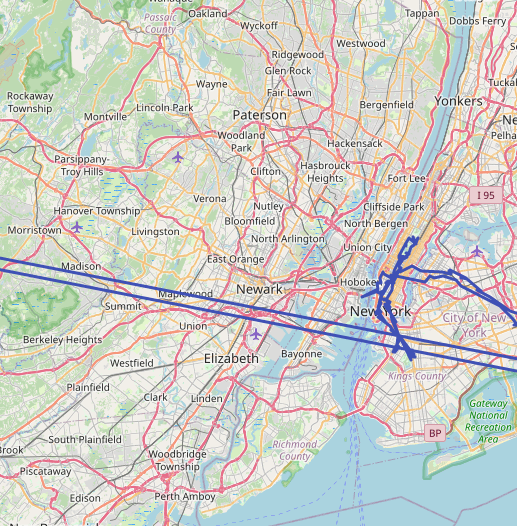

And the history looks a lot better:

Adding new data

Now that you have your historical data, what do you do for new data going forward? There are several options, depending on personal preference, including:

- Using the OwnTracks mobile apps

- Copying location from Home Assistant like here. This is the method that I use.
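For completeness, a script can also push individual points to the Recorder over HTTP. The sketch below only builds the request and does not send it. It assumes the Recorder's HTTP endpoint is `/pub` with the user and device passed as `u` and `d` query parameters, which you should verify against the Recorder documentation for your version. The base URL, the function name `build_location_request`, and the minimal payload are all illustrative assumptions, and this is not the Home Assistant method mentioned above.

```python
import json
import urllib.parse
import urllib.request


def build_location_request(base_url, user, device, lat, lon, tst):
    """Build (but do not send) a POST request carrying one location record."""
    # Same record shape as the lines in the .rec files: a "location" with lat, lon and tst.
    payload = {"_type": "location", "lat": lat, "lon": lon, "tst": int(tst)}
    # Assumption: the Recorder accepts user and device as u/d query parameters on /pub.
    query = urllib.parse.urlencode({"u": user, "d": device})
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/pub?{query}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would submit the point; keeping construction and sending separate makes it easy to inspect the request first.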

Conclusion

OwnTracks is an open-source place to store your location history. You can import your existing history from Google Maps, then report new data into OwnTracks and keep a local copy of your location history.
